OS: Apple OS X, IRIX, Red Hat Linux. Hardware: Apple, SGI, and IBM workstations (single, dual, and multiple), 250 MHz to 2.8 GHz CPU, 1 GB to 16 GB RAM. Rendering: RenderMan 10/11, Lightwave 5.6. Compositing: Final Cut Pro, After Effects. Additional software: RSI Interactive Data Language (IDL), Erdas Imagine, Photoshop. Custom software: stand-alone applications or embedded software to translate original scientific data into textures and models. One example is custom IDL code that takes satellite data and converts them into formats suitable for modeling.
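
The production's custom translators were written in IDL and are not reproduced in these notes. As a rough illustration of the general idea only (turning a grid of measurements into an image a modeling package can load as a texture), here is a minimal Python sketch. The function names `scale_to_8bit` and `to_pgm`, the linear rescaling, the treatment of missing samples, and the choice of the PGM format are all assumptions made for this example, not details of the original pipeline.

```python
from typing import List, Optional, Sequence

Grid = Sequence[Sequence[Optional[float]]]


def scale_to_8bit(grid: Grid) -> List[List[int]]:
    """Linearly rescale a 2D grid of measurements to the range 0..255.

    Missing samples (None) are mapped to 0. This is an assumption for the
    sketch; a real pipeline might interpolate or mask them instead.
    """
    valid = [v for row in grid for v in row if v is not None]
    if not valid:
        raise ValueError("grid contains no valid samples")
    lo, hi = min(valid), max(valid)
    span = (hi - lo) or 1.0  # avoid division by zero on a constant grid
    return [
        [0 if v is None else round(255 * (v - lo) / span) for v in row]
        for row in grid
    ]


def to_pgm(pixels: Sequence[Sequence[int]]) -> bytes:
    """Encode 8-bit pixel rows as a binary PGM (P5) grayscale image."""
    height = len(pixels)
    width = len(pixels[0])
    header = f"P5\n{width} {height}\n255\n".encode("ascii")
    body = bytes(p for row in pixels for p in row)
    return header + body


if __name__ == "__main__":
    # Hypothetical 2x2 sample grid with one missing value.
    sample = [[0.0, 1.0], [5.0, None]]
    with open("texture.pgm", "wb") as fh:
        fh.write(to_pgm(scale_to_8bit(sample)))
```

A grayscale image of this kind can be applied as a color, bump, or displacement map, which is one common way for gridded data to end up on a model's surface.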

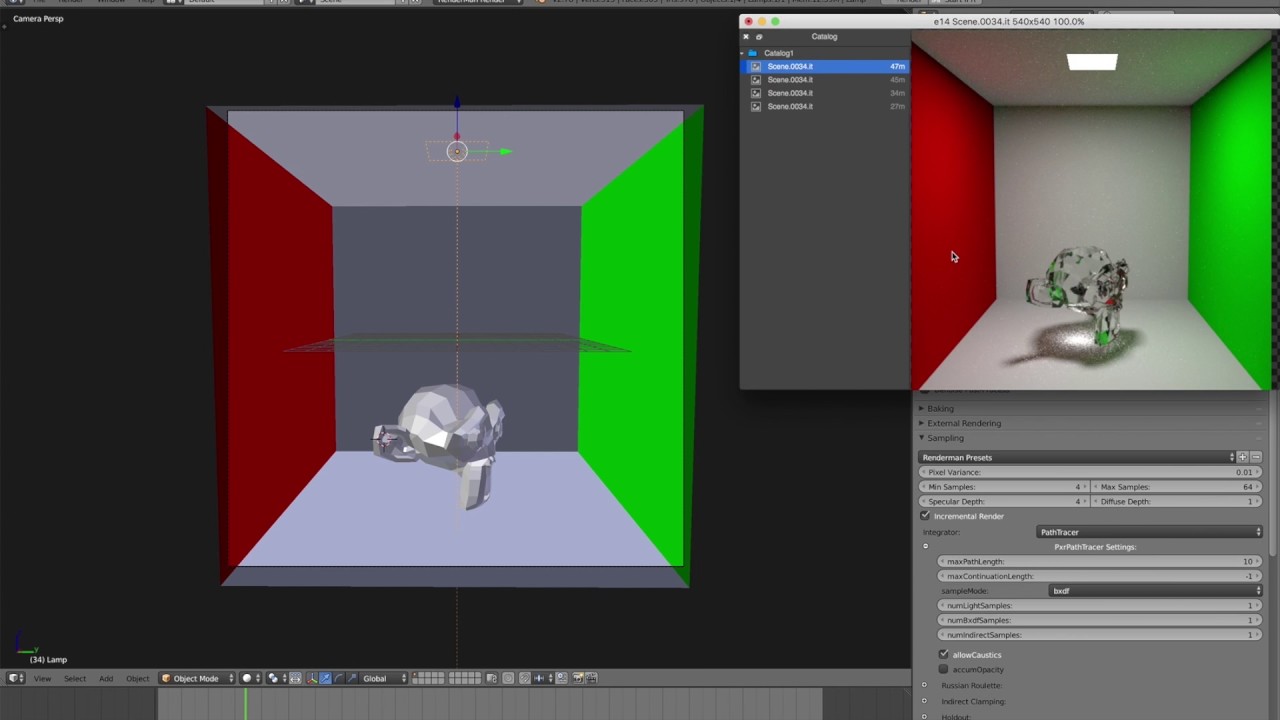

Here we visualize the Earth using real data from an orbiting fleet of powerful instruments. Each of the visualizations is based on actual scientific research; nothing here is mere "window dressing". Source media for this video were originally delivered in high definition.

Modeling: satellite sensors captured multiple wavelengths of reflected and emitted light. NASA science teams converted the raw signals into data, and visualizers then turned the data into pictures. Visualizers ingested satellite data into Maya or Lightwave, then used RenderMan and other tools on a UNIX render farm. Satellite and rocket models were designed in both Lightwave and Maya. Scenes using GOES cloud data used an automated rotoscoping technique, with infrared and visible light data rotoscoped in a custom-designed process to synchronize the two channels. Post-production used After Effects and Final Cut Pro to composite and edit the piece.

Modeling and animation: Lightwave 5.6 and Maya 4/5. Rendering technique used most: RenderMan, Lightwave, and Mental Ray on Linux and SGI systems. Average CPU time for rendering per frame: 10 seconds to three days, depending on data complexity and treatment. Total production time: approximately two weeks, following months of principal R&D.

Production highlight: These visualizations began their creative development as individual elements that could be understood by national news audiences in 20 seconds or less. One or two visualizers worked in partnership with scientists and a television producer to create these images, often with heavy constraints on R&D resources. Though challenging, these limitations regularly propelled the development of innovative technical and aesthetic treatments. The final sequence in this production begins with the visualization of a launch from Cape Canaveral, Florida, using actual satellite data of the Earth, and then proceeds to recreate two famous photos taken from the Apollo 8 and Apollo 17 missions to the moon.

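
The notes do not say how the infrared and visible GOES channels were synchronized. One plausible building block for any such process is pairing frames from the two channels by acquisition time. The sketch below shows that idea only: the function name `pair_frames`, the use of timestamps in seconds, and the tolerance parameter are hypothetical and are not taken from the production.

```python
from bisect import bisect_left
from typing import List, Sequence, Tuple


def pair_frames(
    visible_times: Sequence[float],
    infrared_times: Sequence[float],
    tolerance_s: float,
) -> List[Tuple[float, float]]:
    """Pair each visible-light frame with the nearest infrared frame in time.

    Timestamps are seconds from an arbitrary shared epoch. Visible frames
    with no infrared frame within `tolerance_s` seconds are dropped.
    """
    ir = sorted(infrared_times)
    pairs: List[Tuple[float, float]] = []
    for t in visible_times:
        i = bisect_left(ir, t)
        # The nearest infrared frame is either just before or just after t.
        candidates = ir[max(i - 1, 0): i + 1]
        if not candidates:
            continue
        best = min(candidates, key=lambda c: abs(c - t))
        if abs(best - t) <= tolerance_s:
            pairs.append((t, best))
    return pairs


if __name__ == "__main__":
    # Hypothetical acquisition times in seconds.
    visible = [0, 900, 1800]
    infrared = [10, 905, 2500]
    print(pair_frames(visible, infrared, tolerance_s=60))
```

Dropping unmatched frames keeps the two image sequences the same length, which makes it simpler to composite them frame by frame afterwards.
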
View "The Edge of History: Earth Visualized"

The disciplines of earth science are just now crossing the threshold of a new era. In almost all aspects of research about our home planet, space-based data collection is beginning to play a principal role, a role that was impossible prior to the still-dawning information revolution. Scientific visualization reveals in data what would otherwise be invisible. But unlike tangible or directly observable data collected by researchers in situ, remotely collected data present conceptual challenges to non-experts. To the casual viewer, uncontextualized scientific visualization can seem arcane at best and irrelevant at worst. In an effort to broaden mainstream understanding of, and enthusiasm for, this kind of work, NASA commissioned this video.
